Have you ever taken a quick photo of a vast landscape like the Grand Canyon – that’s what sampling is like.

Even this panorama does not capture all of the Grand Canyon, but it allows us to make an educated guess about what we cannot see. In statistics, sampling allows us to make an educated guess about an entire group based on a smaller part we can more easily study. Sampling helps us save time and money while still getting information that we can use to understand the whole group better. Let’s uncover the secrets of sampling together!

What is Sampling

A well-chosen sample is like a mirror: A carefully selected sample reflects the characteristics of the whole group very closely. It helps us reduce mistakes in our estimates about the entire group, known as the population.

Sampling sets the stage for inferential statistics: Once we have our sample, we can make educated guesses about the whole group using inferential statistics. It’s like solving a puzzle – the sample provides us with clues to understand the larger picture.

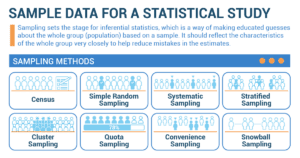

Sampling Methods

Think of a census like a roll-call where you collect data from everyone in the group, known as the population. A census shines when it’s possible and practical to include everyone in your study. For example, if you were investigating the favorite ice cream flavors of all employees in your office, conducting a census would involve asking each and every employee for their preferred flavor.

- Benefits: A census counts everyone in a population, giving us accurate information about everyone. We can learn about the whole group and make precise conclusions.

- Limitations: Doing a census takes a lot of time, money, and effort. It can be hard to reach everyone or get them to answer all the questions.

Imagine picking names out of a hat. That’s exactly what simple random sampling is like. In this method, each member of the group has an equal chance of being selected. Simple random sampling works best when everyone in your group is pretty much alike or homogeneous. For instance, if you wanted to know the average height of employees in your company, you could randomly select a group and measure their height.

- Benefits: Simple random sampling gives everyone an equal chance to be chosen for the sample. It’s fair and helps avoid bias in the selection process.

- Limitations: Simple random sampling might not show the differences between smaller groups in the population. We might miss important details about specific parts of the group.

Systematic sampling is like playing hopscotch with your data. You select every ‘nth’ member in your population. This method is simple and effective, especially for large groups. Let’s say you wanted to investigate the reading habits of employees in your office. You could select every 5th employee from the company’s register and survey them about their reading preferences.

- Benefits: Systematic sampling is easy to do and doesn’t require random numbers. It works well when there is a pattern or order in the population.

- Limitations: If there is a hidden pattern in the population, systematic sampling might give us the wrong results. It might not capture everyone’s differences if they don’t fit the pattern.

Stratified sampling is like sorting your group into smaller teams or ‘strata’ and picking from each one. This method is handy when your group is varied or heterogeneous. Suppose you wanted to understand the opinions of workers in a factory regarding salary rates. You could divide them into different age groups (strata) and randomly select a few from each group to represent their opinions.

- Benefits: Stratified sampling helps us include different groups or types of people in our sample. We can learn about each group separately and get more accurate information.

- Limitations: Stratified sampling takes time and planning because we need to know about the different groups in the population. It might not show us all the differences within each group.

Imagine your group is a large pizza spread across a wide area. Cluster sampling is when you take a few ‘slices’ (clusters) to study. This method is ideal when the group is large and geographically dispersed. For instance, if you wanted to investigate the pollution levels in your city, you could randomly select a few neighborhoods (clusters) and measure the air quality in each of them.

- Benefits: Cluster sampling is good when people are spread out or grouped together in certain areas. It saves time and effort by selecting groups instead of individuals.

- Limitations: Cluster sampling might not give us the same level of accuracy as other methods. We might miss out on the differences between individuals within each group.

Quota sampling: Meeting targets

Quota sampling is like setting a goal. Let’s say you wanted to interview ten people from each age group to understand their preferences for video games. You would make sure you reach your target by selecting participants from each age group until you meet the quota. This method ensures you get a certain amount of data from different segments of your group.

- Benefits: Quota sampling lets us choose a specific number of people from different groups. We can make sure our sample represents the diversity in the population.

- Limitations: Quota sampling relies on the researcher’s judgment, which can introduce bias. It might not capture all the differences within each group.

Snowball sampling involves asking a friend, who asks a friend, and so on, to participate in your study. This method is often used when you have a hard-to-reach population. Suppose you wanted to understand the social media habits of teenagers in your city. You could start by surveying a few teenagers you know and then ask them to recommend other participants. This way, the network of participants grows like a snowball rolling down a hill.

- Benefits: Snowball sampling helps us study people who are hard to reach or find. We can learn about hidden or rare groups by asking participants to refer others.

- Limitations: Snowball sampling might not give us a complete picture of the whole population. The people referred by participants might have similar characteristics, so we might miss out on other perspectives.

Enhancing Sound System Quality through Data Sampling

In the bustling world of consumer electronics, Sarah Mitchell, a seasoned corporate professional, found herself tasked with a critical project: conducting a statistical study to enhance the sound quality of a cutting-edge sound system. Her journey would involve navigating the intricate realm of acoustics and audio engineering, all while ensuring the accuracy of her data through well-designed sampling techniques.

Sarah’s foray into this project began with immersing herself in the world of sound systems. She attended industry expos, collaborated with audio engineers, and even spent time in recording studios to grasp the intricacies of sound propagation and its impact on user experience. Recognizing that sound quality was influenced by a multitude of variables, Sarah meticulously categorized them. These included speaker configuration, room acoustics, frequency response, and audio formats. Her goal was to ensure her data sampling encompassed the full spectrum of influences on sound quality.

Sarah knew that a balanced and representative sample size was essential to yield meaningful insights. Collaborating with her team, she decided to collect data from a diverse range of environments – from home entertainment setups to commercial theaters. This cross-sectional approach would ensure her findings were applicable across various scenarios. With a wide array of potential participants, Sarah needed a methodical approach to select the right ones. She identified audio enthusiasts, sound engineers, and casual users as her target group. By incorporating a mix of expertise levels, she aimed to capture a holistic understanding of sound quality perception.

Understanding the importance of reducing bias, Sarah ensured that her sampling process was randomized. This prevented any conscious or unconscious bias in selecting participants or environments. By randomly selecting participants and settings, she minimized the risk of skewing her results. Sarah recognized that sound quality could be assessed through both objective measurements and subjective perceptions. She leveraged advanced audio measurement tools to gather objective data, such as frequency response curves and signal-to-noise ratios. Additionally, she designed surveys to capture participants’ subjective perceptions of sound quality, allowing her to compare technical measurements with real-world experiences.

As data poured in from a multitude of settings and sources, Sarah collaborated closely with her team of data analysts. They employed statistical techniques to identify correlations between different variables and sound quality perception. Through careful analysis, they unveiled patterns that indicated how specific factors influenced user preferences. Sarah’s dedication didn’t stop with data collection and analysis. She recognized that her findings needed to be applied in a real-world context. Collaborating with the engineering team, she translated the insights into actionable design changes for the sound system. These iterative improvements were informed by the data she had meticulously collected.

As the new and improved sound systems hit the market, they garnered praise for their enhanced audio quality. The refinements made based on Sarah’s study findings translated into richer, clearer sound experiences across a variety of environments. Her comprehensive approach to data sampling and analysis had not only influenced product development but had also set a precedent for evidence-based design decisions within the company. Sarah Mitchell’s journey through the world of sound systems, guided by her expertise in corporate strategy, resulted in a transformative statistical study. Her case study serves as a testament to the power of well-designed data sampling techniques in driving innovation and delivering tangible improvements to consumer products.